ICLR 2020: Compression based bound for non-compressed network: unified generalization error analysis of large compressible deep neural network

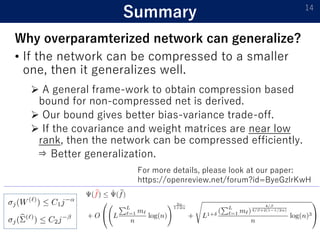

1) The document presents a new compression-based bound for analyzing the generalization error of large deep neural networks, even when the networks are not explicitly compressed.

2) It shows that if the weight matrices and covariance matrices of a trained network are nearly low-rank, then the network has a small intrinsic dimensionality and can be efficiently compressed.

3) This allows deriving a tighter generalization bound than existing approaches, providing insight into why overparameterized networks generalize well despite having more parameters than training examples.
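
The low-rank condition in point 2 can be illustrated with a small numerical sketch. This is not the paper's bound or its compression scheme; it only shows, under assumed settings, that a nearly low-rank weight matrix can be replaced by a truncated SVD with far fewer parameters and little error. The matrix `W`, its size, the rank `r`, and the noise level are hypothetical stand-ins for a trained layer, and `truncated_svd_compress` is an illustrative helper name.

```python
import numpy as np


def truncated_svd_compress(W, rank):
    """Best rank-`rank` approximation of W in Frobenius norm (Eckart-Young)."""
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    # Keep only the leading `rank` singular triplets.
    return (U[:, :rank] * s[:rank]) @ Vt[:rank, :]


def relative_error(W, W_hat):
    """Frobenius-norm error of the approximation, relative to the norm of W."""
    return np.linalg.norm(W - W_hat) / np.linalg.norm(W)


rng = np.random.default_rng(0)
m, n, r = 256, 256, 8  # hypothetical layer size and assumed rank

# Hypothetical "trained" weight matrix: a rank-r signal plus small dense noise,
# so the matrix is nearly (not exactly) low-rank.
signal = rng.standard_normal((m, r)) @ rng.standard_normal((r, n))
W = signal + 0.01 * rng.standard_normal((m, n))

W_hat = truncated_svd_compress(W, r)
err = relative_error(W, W_hat)

params_full = m * n              # entries in the dense matrix
params_compressed = r * (m + n)  # entries in the two rank-r factors

print(f"relative error: {err:.4f}")
print(f"parameters: {params_full} dense vs {params_compressed} factored")
```

In this toy setting the factored form stores `r * (m + n)` numbers instead of `m * n`, which is the kind of reduced effective dimensionality that a compression-based argument relies on.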

ICLR 2020

Entropy, Free Full-Text

Taiji Suzuki, Associate Professor at the University of Tokyo

(PDF) MICIK: MIning Cross-Layer Inherent Similarity Knowledge for Deep Model Compression

(PDF) Efficient Visual Recognition with Deep Neural Networks: A Survey on Recent Advances and New Directions

ICLR 2020

ICLR 2020

- Al Non-Tension Compression Joint for Sectorial Conductor According to DIN - KFAR MENACHEM

- Zocks Non-Compression-Eggplant

- 12-10 AWG NON INSULATED 1/4 COMPRESSION TERMINAL SLIP ON - FEMALE – A&R Supply - Air Conditioning & Refrigeration Wholesaler

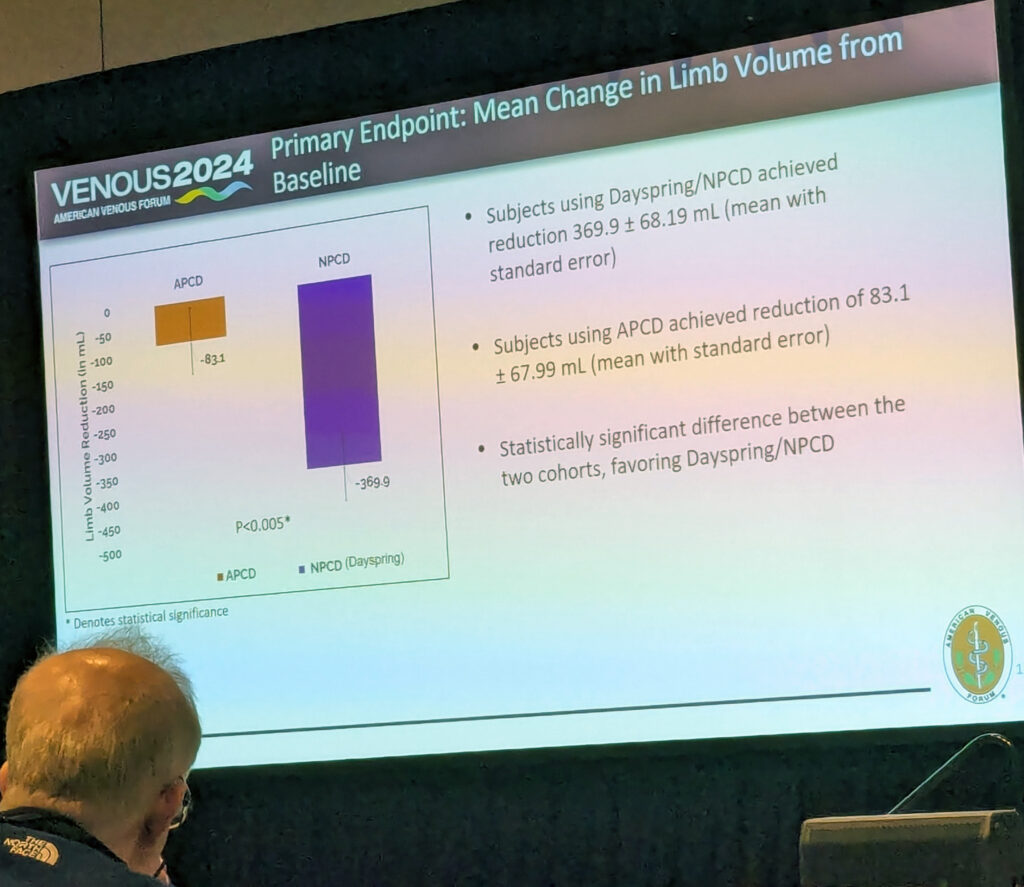

- Portable non-pneumatic compression device shows superiority over

- Non Return Single Check Valve 22mm Compression - 07001400