What is supervised fine-tuning? — Klu

By A Mystery Man Writer

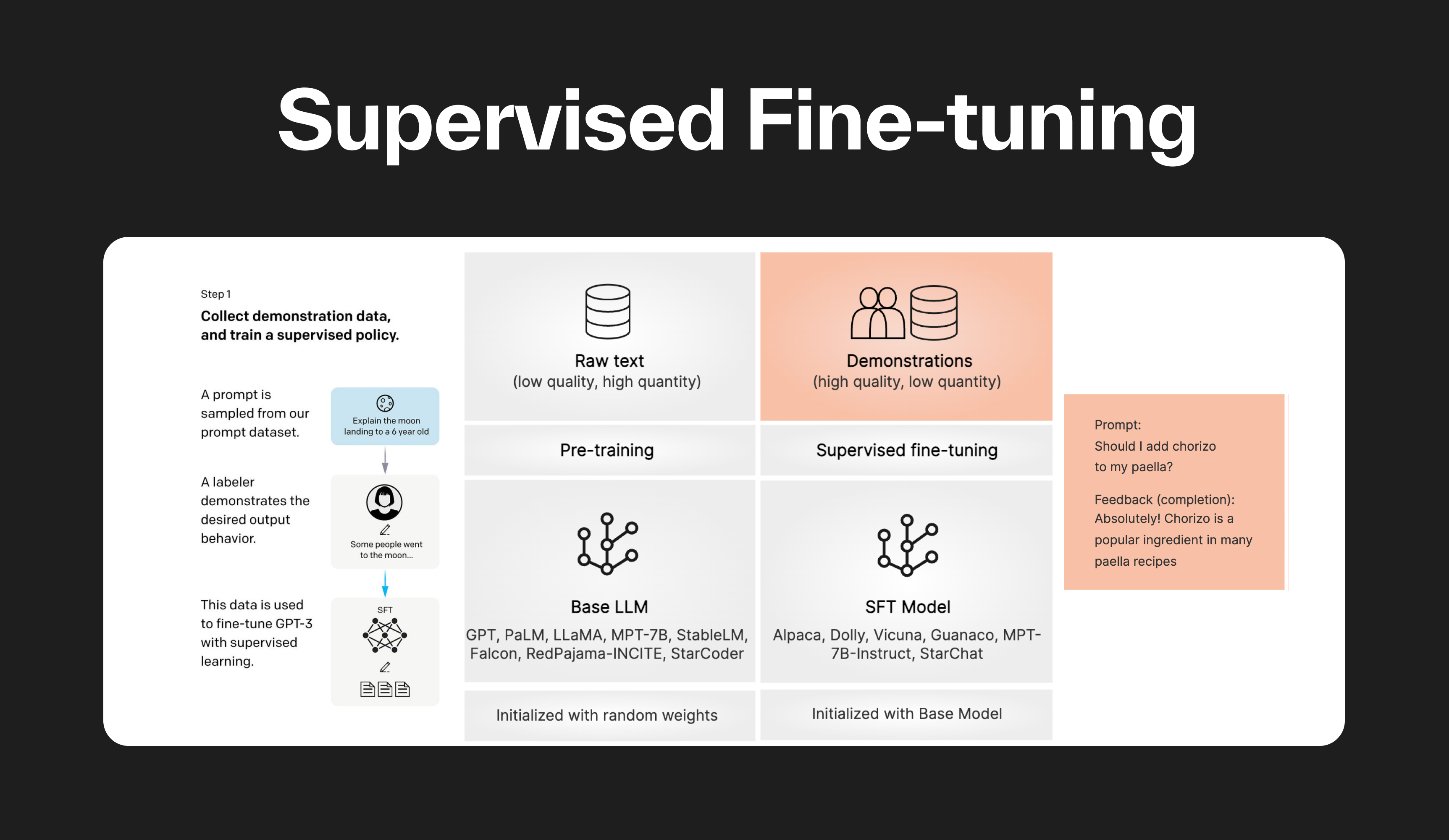

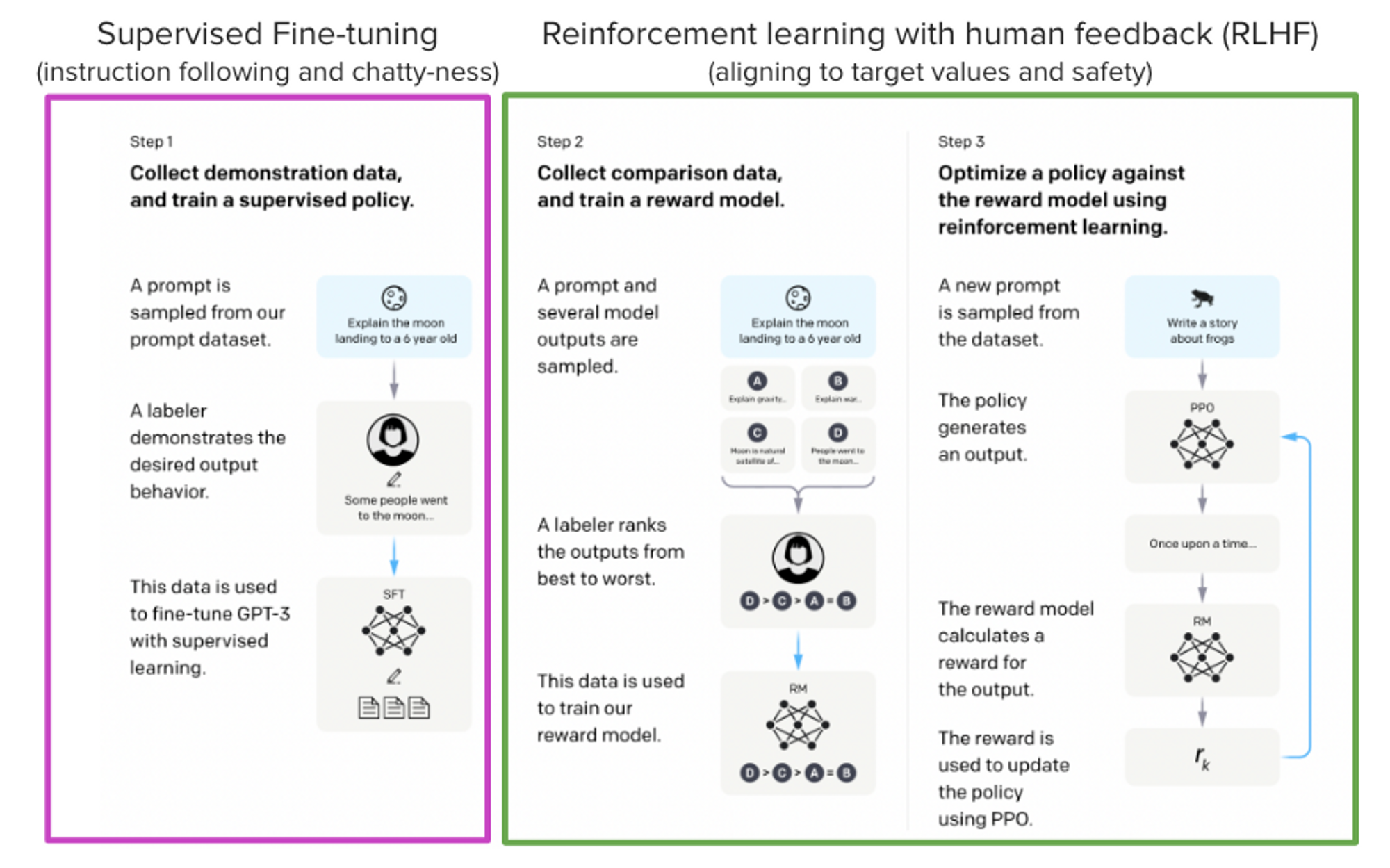

Supervised fine-tuning (SFT) is a machine learning method for adapting a pre-trained model to a specific task. The model is first trained on a large, general dataset, then fine-tuned on a smaller, task-specific dataset of labeled examples. This lets the model retain the general knowledge learned during pre-training while adapting to the characteristics of the smaller dataset.
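To make the two-stage idea concrete, here is a minimal sketch in plain Python. It is a toy illustration, not how large language models are actually trained: the one-variable linear model, the synthetic datasets, and the names `make_data`, `train`, and `mse` are all assumptions made for this example. The model is first fit on a large "general" dataset, then the same weights are trained further on a small labeled "task" dataset with a lower learning rate and fewer steps.

```python
import random


def make_data(n, slope, intercept, noise=0.1):
    """Hypothetical helper: n labeled (x, y) pairs near the line y = slope*x + intercept."""
    data = []
    for _ in range(n):
        x = random.uniform(-1, 1)
        data.append((x, slope * x + intercept + random.gauss(0, noise)))
    return data


def mse(params, data):
    """Mean squared error of the linear model (w, b) on labeled data."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(params, data, lr, epochs):
    """Full-batch gradient descent on labeled pairs, starting from params."""
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


random.seed(0)

# Stage 1: "pre-train" from scratch on a large, general dataset (assumed: y ≈ 2x + 1).
general = make_data(1000, slope=2.0, intercept=1.0)
pretrained = train((0.0, 0.0), general, lr=0.1, epochs=200)

# Stage 2: fine-tune the SAME weights on a small labeled task dataset
# (assumed: y ≈ 2.5x + 1.5), using a lower learning rate and fewer epochs.
task = make_data(20, slope=2.5, intercept=1.5)
fine_tuned = train(pretrained, task, lr=0.05, epochs=50)

print("task loss before fine-tuning:", mse(pretrained, task))
print("task loss after fine-tuning: ", mse(fine_tuned, task))
```

Starting the second call to `train` from `pretrained` rather than from `(0.0, 0.0)` is the point of the sketch: the small dataset only has to nudge weights that are already close, which is why a short, gentle training run is enough to lower the task loss.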

Bringing LLM Fine-Tuning and RLHF to Everyone

AI in the News: Alignment, Doom, Risk, and Understanding — Klu

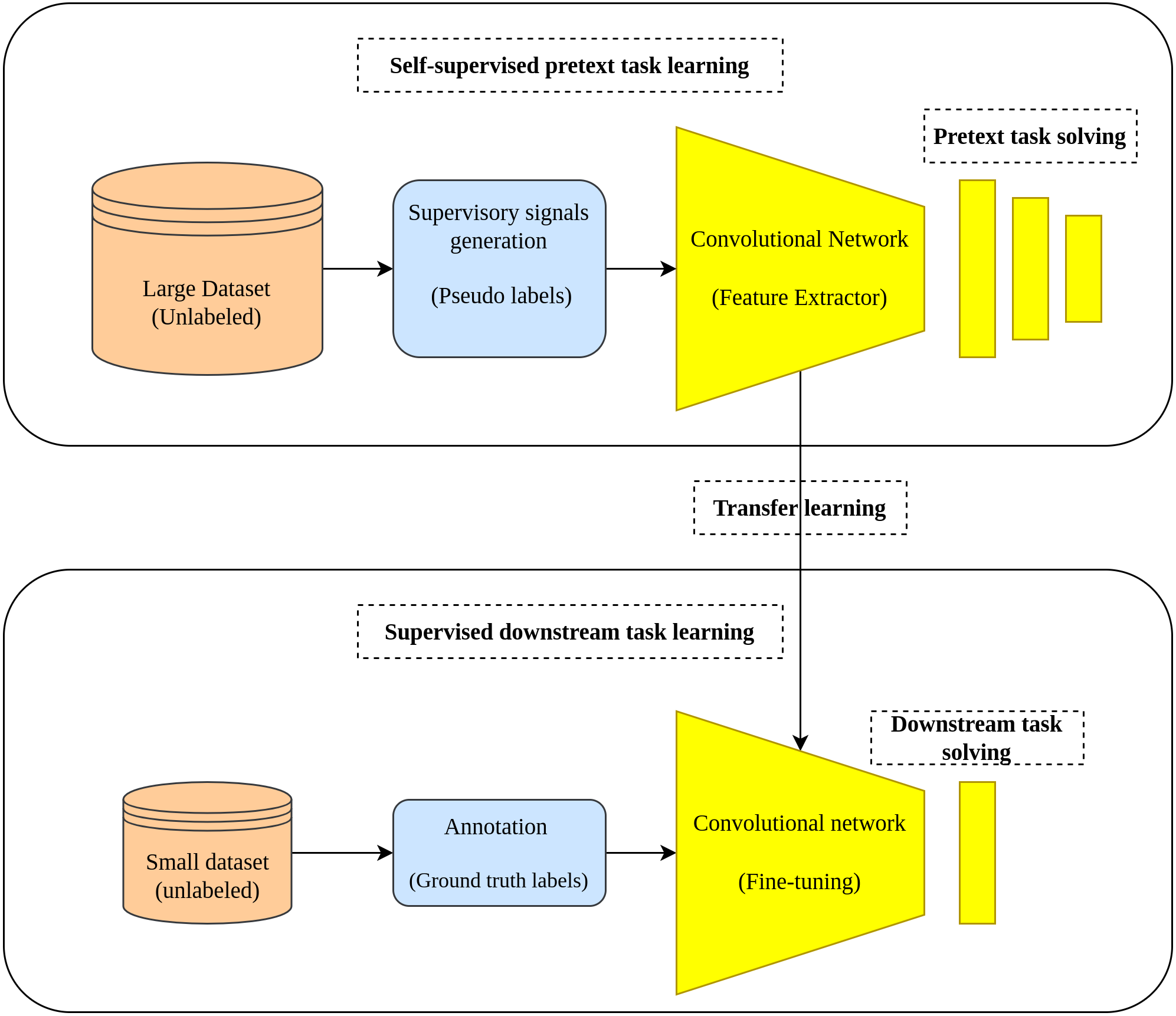

Evaluation of Effectiveness of Self-Supervised Learning in Chest X

A) Fine-tuning a pre-trained language model (PLM)

LLM Fine-tuning: Old school, new school, and everything in between

CoFRIDA: Self-Supervised Fine-Tuning for Human-Robot Co-Painting

Supervised Fine-tuning: customizing LLMs

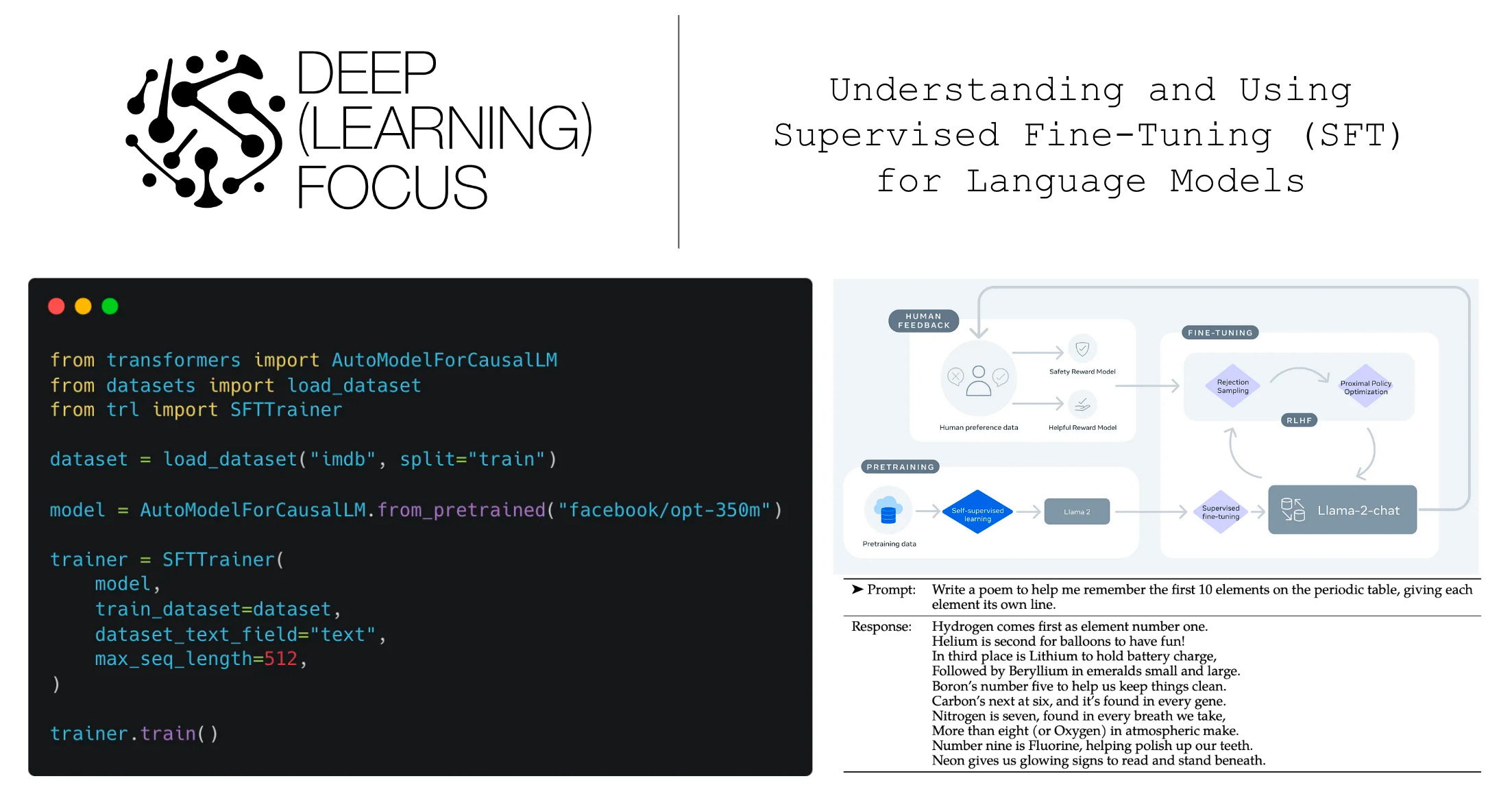

Understanding and Using Supervised Fine-Tuning (SFT) for Language

JSAN, Free Full-Text

Self-supervised learning methods and applications in medical

Fine-Tune XLSR-Wav2Vec2 for low-resource ASR with 🤗 Transformers

Fine-Tuning LLMs With Retrieval Augmented Generation (RAG)

Supervised Fine Tuning

- What is LLM Fine-Tuning? – Everything You Need to Know [2023 Guide]

- 14.2. Fine-Tuning — Dive into Deep Learning 1.0.3 documentation

- How to Fine-tune Llama 2 with LoRA for Question Answering: A Guide

- Fine-tuning a Neural Network explained - deeplizard

- How to Finetune Mistral AI 7B LLM with Hugging Face AutoTrain - KDnuggets