ICLR 2020: Compression based bound for non-compressed network


ICLR 2020: Compression based bound for non-compressed network: unified generalization error analysis of large compressible deep neural network

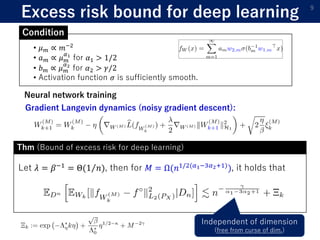

1) The document presents a compression-based bound on the generalization error of large deep neural networks that applies even when the network is not explicitly compressed.

2) It shows that if the weight matrices and covariance matrices of a trained network are nearly low rank, the network has a small intrinsic dimensionality and can be compressed efficiently.

3) This yields a tighter generalization bound than existing approaches and offers insight into why overparameterized networks generalize well despite having more parameters than training examples.
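The link between low rank and compressibility in point 2 can be illustrated with a small NumPy sketch. This is not the method from the slides and it does not compute any generalization bound. It only shows that a nearly low-rank matrix can be stored with far fewer parameters at small reconstruction error. The matrix sizes, the noise level, and the helper names `truncate_rank` and `relative_error` are assumptions made for illustration, and the "trained" weight matrix is synthetic.

```python
import numpy as np


def truncate_rank(weight, rank):
    """Best rank-`rank` approximation of `weight` in Frobenius norm, via SVD."""
    u, s, vt = np.linalg.svd(weight, full_matrices=False)
    # Keep only the top `rank` singular triplets.
    return (u[:, :rank] * s[:rank]) @ vt[:rank, :]


def relative_error(weight, approx):
    """Frobenius-norm error of `approx` relative to the norm of `weight`."""
    return np.linalg.norm(weight - approx) / np.linalg.norm(weight)


rng = np.random.default_rng(0)
m, n, true_rank = 256, 256, 8

# Synthetic stand-in for a trained layer (an assumption, not real weights):
# a rank-8 signal plus small dense noise, so the matrix is "nearly" low rank.
signal = rng.standard_normal((m, true_rank)) @ rng.standard_normal((true_rank, n))
weight = signal + 0.01 * rng.standard_normal((m, n))

compressed = truncate_rank(weight, true_rank)
err = relative_error(weight, compressed)

# Parameter counts: dense storage versus storing the two low-rank factors.
params_full = m * n
params_low_rank = true_rank * (m + n)

print(f"relative error: {err:.4f}")
print(f"parameters: {params_full} dense vs {params_low_rank} factored")
```

In this toy setting the factored form needs 16 times fewer parameters than the dense matrix. A compression-based analysis in the spirit of the summary argues about the small compressed model and then transfers the conclusion to the original network.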

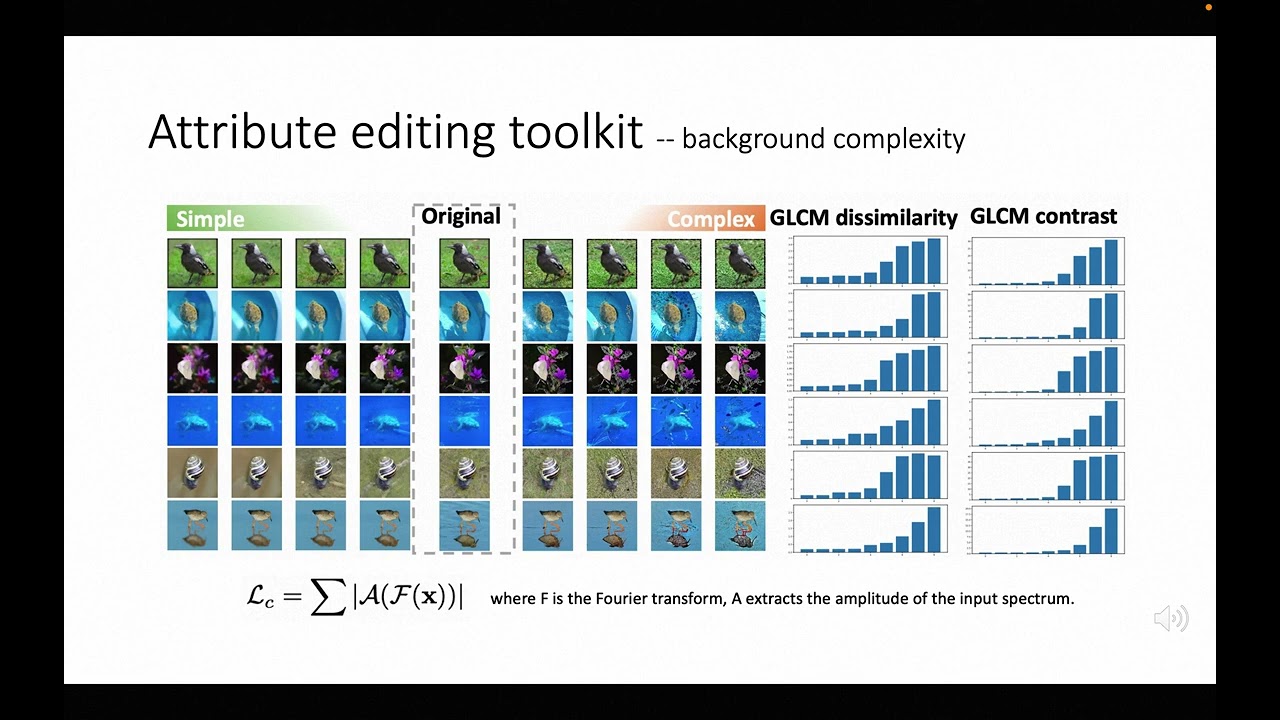

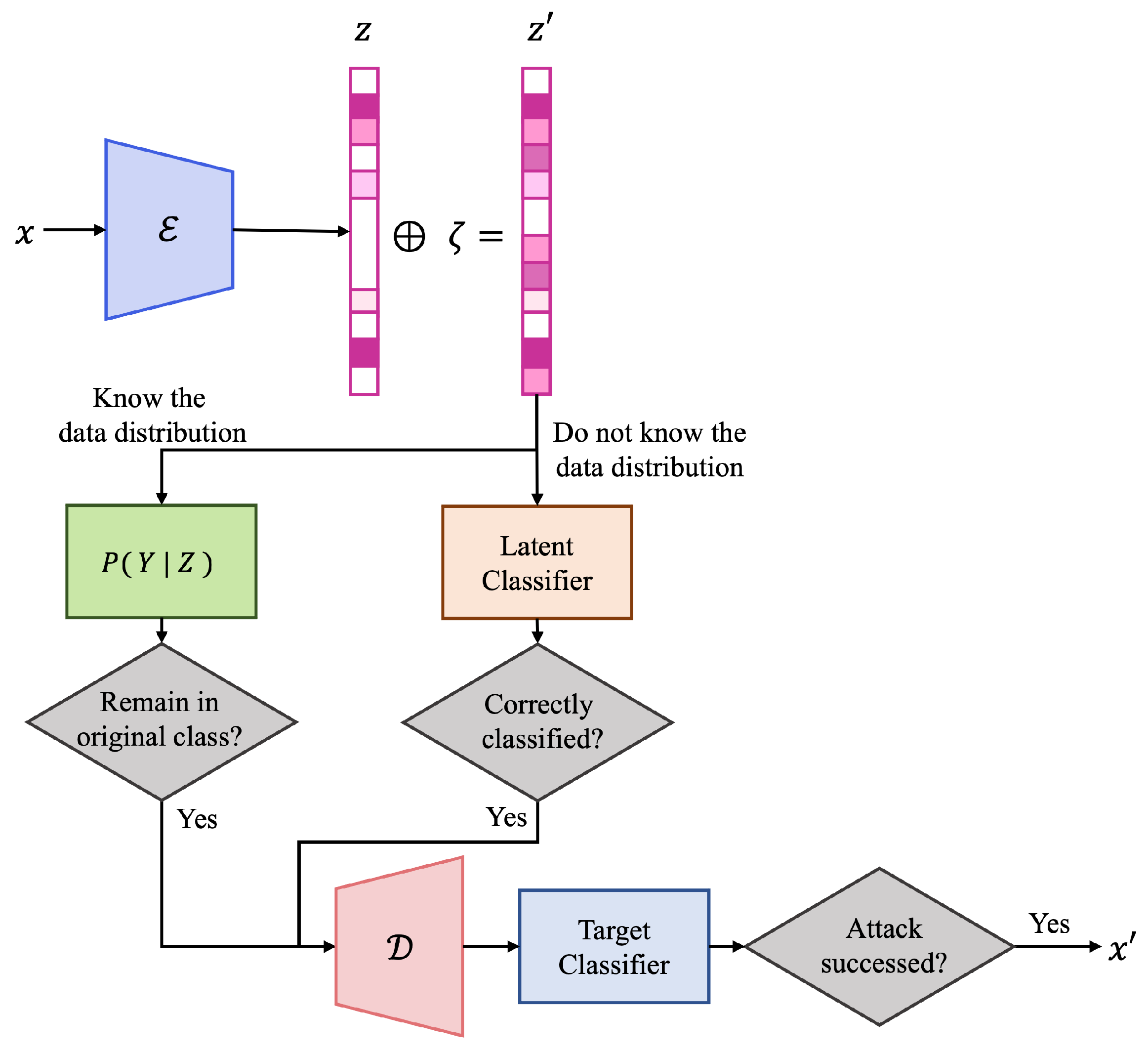

CVPR 2023

ICLR2021 (spotlight)] Benefit of deep learning with non-convex noisy gradient descent

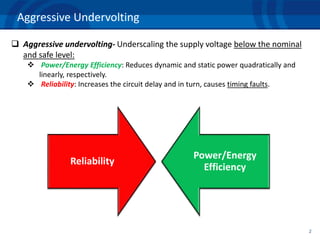

On the Resilience of Deep Learning for reduced-voltage FPGAs

PDF) Information Bound and Its Applications in Bayesian Neural Networks

ICLR 2020

ICLR2021 (spotlight)] Benefit of deep learning with non-convex noisy gradient descent

PAC-Bayesian Bound for Gaussian Process Regression and Multiple Kernel Additive Model

CNN for modeling sentence

Future Internet, Free Full-Text

- Medical Grade vs. Non-Medical Grade Compression Stockings

- non-consensual head compression, Paul Williamson Quintet

- 12-10 AWG NON INSULATED 1/4 COMPRESSION TERMINAL SLIP ON - FEMALE – A&R Supply - Air Conditioning & Refrigeration Wholesaler

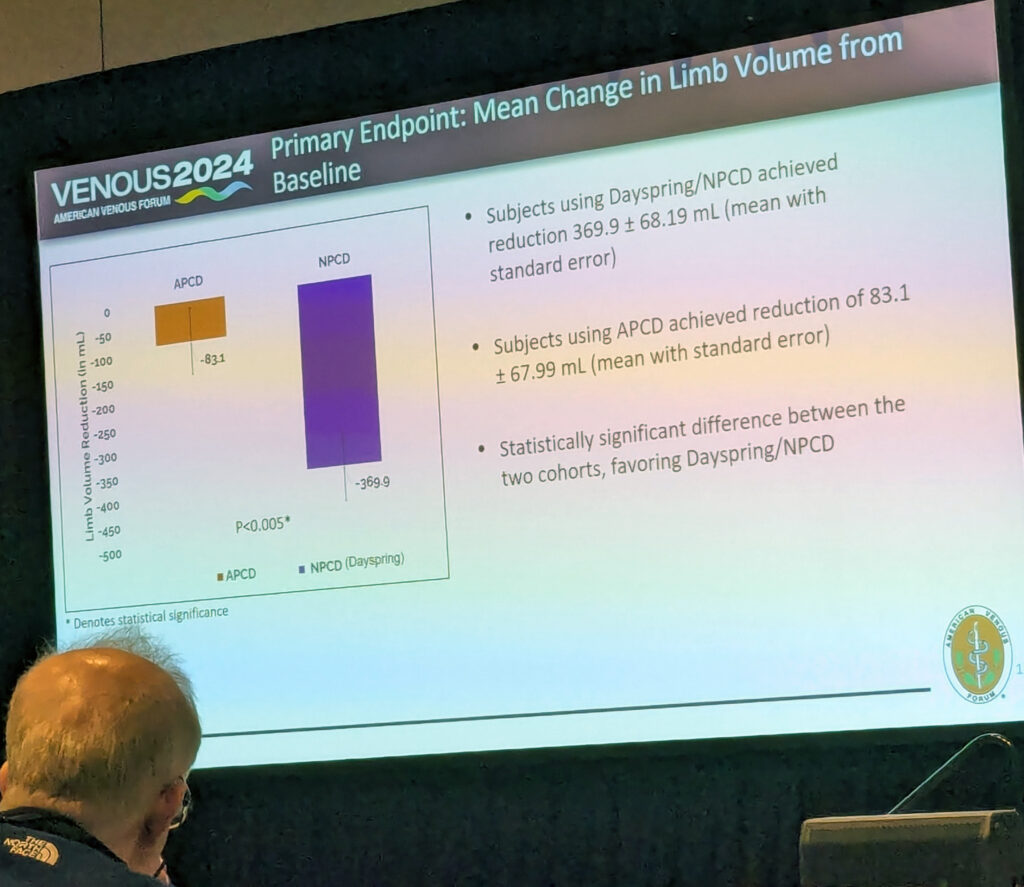

- Portable non-pneumatic compression device shows superiority over

- Al Non-Tension Compression Joint 36 kV with Blind Hole According to DIN - KFAR MENACHEM

- Legging Nike Dri-Fit 7/8 Tight Pink - Intense Fitness

- Addiction Recovery Quote Sobriety 12 Steps AA Gift T-Shirt

- 36DD Racerback Bras

- Sunsets Laney Triangle Women's Swimsuit String Bikini Top with Removable Cups, Andalusia, Small : Clothing, Shoes & Jewelry

- Brawl Stars Store 25CM Brawl Stars Spike Plush Dolls