DeepSpeed Compression: A composable library for extreme


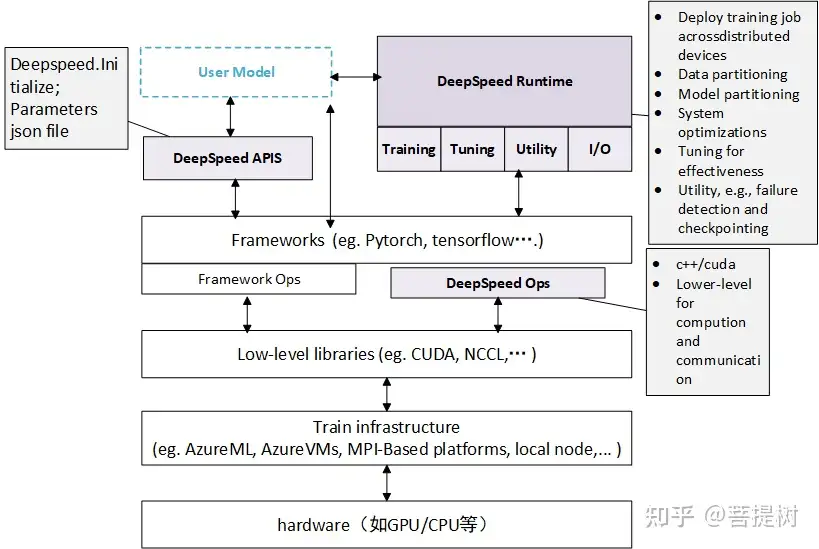

Large-scale models are revolutionizing deep learning and AI research, driving major improvements in language understanding, creative text generation, multilingual translation, and more. But despite their remarkable capabilities, the models’ large size creates latency and cost constraints that hinder the deployment of applications built on them. In particular, increased inference time and memory consumption […]

